System.Numerics.Vector is a library provided by .NET (as a NuGet package) that tries to leverage SIMD instructions on the target hardware. It exposes a few value types, such as Vector<T>, which RyuJIT recognizes as intrinsics.

Supported intrinsics are listed in the CoreCLR GitHub repository.

This enables SIMD acceleration in C#, provided the code is modified to use these intrinsic types instead of scalar floating-point elements.

On the other hand, Hybridizer aims to provide those benefits without being intrusive in the code (only metadata is required).

We naturally wanted to test if System.Numerics.Vector delivers good performance, compared to Hybridizer.

We measured that Numerics.Vector provides a good speed-up over scalar C# code as long as no transcendental function (such as Math.Exp) is involved, but it still lags behind Hybridizer. Because some operators and mathematical functions are missing, Numerics.Vector can also generate really slow code (when the AVX pipeline is broken). In addition, modifying the code is a heavy process that can’t easily be rolled back.

We wrote and ran two benchmarks, and for each of them we have four versions:

- Simple C# scalar code

- Numerics.Vector

- Simple C# scalar code, hybridized

- Numerics.Vector, hybridized

The processor is a Core i7-4770S @ 3.1GHz (the maximum measured turbo frequency in AVX mode being 3.5GHz). Peak performance is 224 GFlop/s, or 112 GCFlop/s if we count an FMA as a single operation (since our processor supports it).
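The peak figures can be reproduced with a short calculation. It assumes the four cores of the i7-4770S, the two FMA pipelines per core mentioned later in this post, and four doubles per 256-bit AVX register, all running at the measured 3.5GHz turbo frequency:

```latex
\begin{aligned}
P_{\text{FMA as two}} &= 4\ \text{cores} \times 3.5\ \text{GHz} \times 2\ \text{FMA units} \times 4\ \text{doubles} \times 2\ \text{flops} = 224\ \text{GFlop/s} \\
P_{\text{FMA as one}} &= 4\ \text{cores} \times 3.5\ \text{GHz} \times 2\ \text{FMA units} \times 4\ \text{doubles} \times 1 = 112\ \text{GCFlop/s}
\end{aligned}
```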

Compute bound benchmark

This is a compute-intensive benchmark. For each element of a large double-precision array (8 million elements: 67 MBytes), we iterate twelve times the computation of an exponential’s Taylor expansion (expm1). This is more than enough to enter the compute-bound regime, hiding the latency of memory operations behind a large number of floating-point operations.

Scalar code is simply:

[MethodImpl(MethodImplOptions.AggressiveInlining)]

public static double expm1(double x)

{

return ((((((((((((((15.0 + x)

* x + 210.0)

* x + 2730.0)

* x + 32760.0)

* x + 360360.0)

* x + 3603600.0)

* x + 32432400.0)

* x + 259459200.0)

* x + 1816214400.0)

* x + 10897286400.0)

* x + 54486432000.0)

* x + 217945728000.0)

* x + 653837184000.0)

* x + 1307674368000.0)

* x * 7.6471637318198164759011319857881e-13;

}

[MethodImpl(MethodImplOptions.AggressiveInlining)]

public static double twelve(double x)

{

return expm1(expm1(expm1(expm1(expm1(expm1(expm1(expm1(expm1(expm1(expm1(expm1(x))))))))))));

}

We added the AggressiveInlining attribute to help RyuJIT merge operations at JIT time.

The Numerics.Vector version of the code is nearly identical:

[MethodImpl(MethodImplOptions.AggressiveInlining)]

public static Vector<double> expm1(Vector<double> x)

{

return ((((((((((((((new Vector<double>(15.0) + x)

* x + new Vector<double>(210.0))

* x + new Vector<double>(2730.0))

* x + new Vector<double>(32760.0))

* x + new Vector<double>(360360.0))

* x + new Vector<double>(3603600.0))

* x + new Vector<double>(32432400.0))

* x + new Vector<double>(259459200.0))

* x + new Vector<double>(1816214400.0))

* x + new Vector<double>(10897286400.0))

* x + new Vector<double>(54486432000.0))

* x + new Vector<double>(217945728000.0))

* x + new Vector<double>(653837184000.0))

* x + new Vector<double>(1307674368000.0))

* x * new Vector<double>(7.6471637318198164759011319857881e-13);

}
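For reference, here is a minimal sketch of how such a vector function is typically applied to a whole array. It is not the benchmark driver used for the measurements: the class name `VectorLoopSketch`, the method `Apply`, and the stand-in `Kernel` functions are hypothetical, and any `Vector<double>` function such as the expm1 above could take the kernel's place.

```csharp
using System;
using System.Numerics;

public static class VectorLoopSketch
{
    // Hypothetical stand-in kernel; replace with any Vector<double> function.
    public static Vector<double> Kernel(Vector<double> x)
    {
        return x * x + new Vector<double>(1.0);
    }

    // Scalar twin of the kernel, used for the elements left over at the end.
    public static double Kernel(double x)
    {
        return x * x + 1.0;
    }

    public static void Apply(double[] input, double[] output)
    {
        int width = Vector<double>.Count; // number of doubles per hardware vector
        int i = 0;

        // Main loop: load, compute and store one full vector per iteration.
        for (; i <= input.Length - width; i += width)
        {
            Vector<double> x = new Vector<double>(input, i);
            Kernel(x).CopyTo(output, i);
        }

        // Remainder loop: the array length need not be a multiple of the width.
        for (; i < input.Length; ++i)
        {
            output[i] = Kernel(input[i]);
        }
    }
}
```

Calling `VectorLoopSketch.Apply(input, output)` fills `output` element by element. The manual remainder loop is part of the intrusiveness discussed in this post: the scalar version needs no such bookkeeping.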

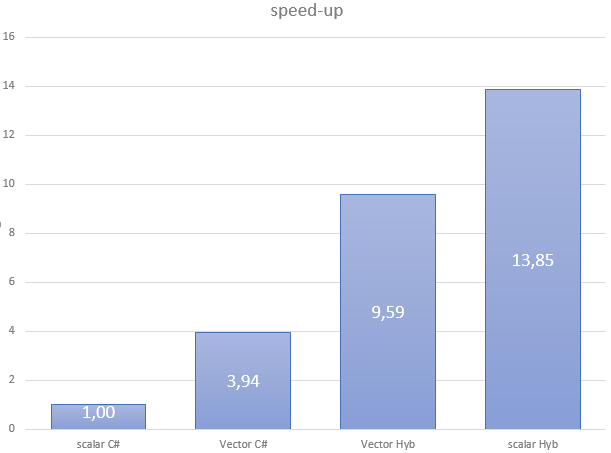

The four versions of this code give the following performance results:

| Flavor | Scalar C# | Vector C# | Vector Hyb | Scalar Hyb |
| --- | --- | --- | --- | --- |
| GCFlop/s | 4.31 | 19.95 | 41.29 | 59.65 |

As stated, Numerics.Vector delivers a speed-up of roughly 4.6x over scalar code (19.95 vs. 4.31 GCFlop/s). However, performance is far from what we reach with Hybridizer. If we look at the generated assembly, it’s quite clear why:

vbroadcastsd ymm0,mmword ptr [7FF7C2255B48h]

vbroadcastsd ymm1,mmword ptr [7FF7C2255B50h]

vbroadcastsd ymm2,mmword ptr [7FF7C2255B58h]

vbroadcastsd ymm3,mmword ptr [7FF7C2255B60h]

vbroadcastsd ymm4,mmword ptr [7FF7C2255B68h]

vbroadcastsd ymm5,mmword ptr [7FF7C2255B70h]

vbroadcastsd ymm7,mmword ptr [7FF7C2255B78h]

vbroadcastsd ymm8,mmword ptr [7FF7C2255B80h]

vaddpd ymm0,ymm0,ymm6

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm1

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm2

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm3

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm4

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm5

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm7

vmulpd ymm0,ymm0,ymm6

vaddpd ymm0,ymm0,ymm8

vmulpd ymm0,ymm0,ymm6

; repeated

Fused multiply-adds are not reconstructed, and constant operands are reloaded from the constant pool at each expm1 invocation. This leads to high register pressure (for constants), where memory operands could save some registers.

Here is what the Hybridizer generates from scalar code:

vaddpd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vfmadd213pd ymm1,ymm0,ymmword ptr []

vmulpd ymm0,ymm0,ymm1

vmulpd ymm0,ymm0,ymmword ptr []

vmovapd ymmword ptr [rsp+0A20h],ymm0

; repeated

This reconstructs fused multiply-add, and leverages memory operands to save registers.

Why do we not reach peak performance (112 GCFlop/s)? Haswell has two FMA pipelines, each with a latency of 5 cycles (see the Intel Intrinsics Guide). To reach peak performance, we would need to issue two independent FMA instructions at each cycle, whereas each FMA in the code above depends on the result of the previous one. The hardware reorder buffer is not deep enough to pick up independent instructions that far ahead in the instruction stream, so the instructions would have to be reordered at compile time. LLVM, our backend compiler, is not capable of such reordering. To get better performance, we unfortunately have to write assembly by hand (which is not exactly what a C# programmer expects to do in the morning).
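As a rough back-of-the-envelope estimate, using only the latency and pipeline count quoted above and the measured figure from the table, the number of independent FMAs that must be in flight per core to keep both pipelines busy, and the fraction of peak actually reached by the hybridized scalar code, are:

```latex
\begin{aligned}
N_{\text{in flight}} &= \text{latency} \times \text{pipelines} = 5 \times 2 = 10 \\
\frac{P_{\text{measured}}}{P_{\text{peak}}} &= \frac{59.65}{112} \approx 0.53
\end{aligned}
```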

Invoke transcendentals

In this second benchmark, we need to compute the exponential of all the components of a vector. To do that, we invoke Math.Exp.

Scalar code is:

[EntryPoint]

public static void Apply_scal(double[] d, double[] a, double[] b, double[] c, int start, int stop)

{

int sstart = start + threadIdx.x + blockDim.x * blockIdx.x;

int step = blockDim.x * gridDim.x;

for (int i = sstart; i < stop; i += step)

{

d[i] = a[i] * Math.Exp(b[i]) * Math.Exp(c[i]);

}

}

This function is later called in a Parallel.For construct.
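The driver itself is not shown here. As an illustration only, a plain C# equivalent could look like the following sketch, where the class `ParallelExpSketch`, the chunking scheme and the `chunks` parameter are all assumptions, and the Hybridizer-specific `threadIdx`, `blockDim` and `gridDim` indices are replaced by an explicit half-open range:

```csharp
using System;
using System.Threading.Tasks;

public static class ParallelExpSketch
{
    // Same arithmetic as the kernel above, over the half-open range [start, stop).
    public static void ApplyRange(double[] d, double[] a, double[] b, double[] c, int start, int stop)
    {
        for (int i = start; i < stop; ++i)
        {
            d[i] = a[i] * Math.Exp(b[i]) * Math.Exp(c[i]);
        }
    }

    // Splits the arrays into `chunks` contiguous ranges (chunks must be positive)
    // and processes them in parallel.
    public static void ApplyParallel(double[] d, double[] a, double[] b, double[] c, int chunks)
    {
        int n = d.Length;
        int chunkSize = (n + chunks - 1) / chunks; // ceiling division
        Parallel.For(0, chunks, chunk =>
        {
            int start = chunk * chunkSize;
            int stop = Math.Min(start + chunkSize, n);
            ApplyRange(d, a, b, c, start, stop); // empty when start >= stop
        });
    }
}
```

A call such as `ParallelExpSketch.ApplyParallel(d, a, b, c, Environment.ProcessorCount)` would use one chunk per logical core. Contiguous chunks are chosen here so that each thread works on its own region of memory.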

However, Numerics.Vector does not provide a vectorized exponential function. Therefore, we have to write our own:

[IntrinsicFunction("hybridizer::exp")]

[MethodImpl(MethodImplOptions.AggressiveInlining)]

public static Vector<double> Exp(Vector<double> x)

{

double[] tmp = new double[Vector<double>.Count];

for(int k = 0; k < Vector<double>.Count; ++k)

{

tmp[k] = Math.Exp(x[k]);

}

return new Vector<double>(tmp);

}

At a glance, we can see the problems: each exponential first breaks the AVX context (which costs tens of cycles), and triggers four function calls instead of one.

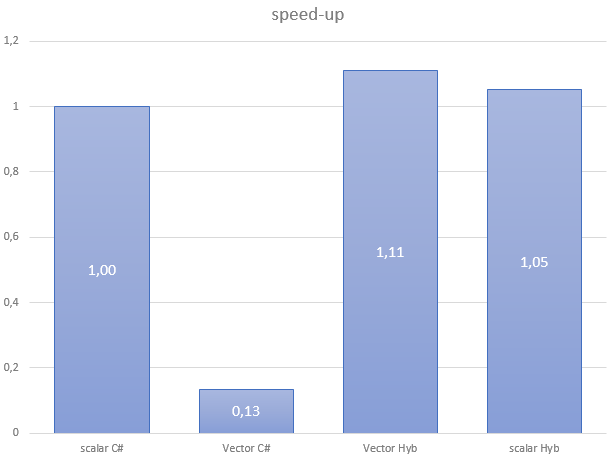

Unsurprisingly, this code performs really badly:

| Flavor | Scalar C# | Vector C# | Vector Hyb | Scalar Hyb |
| --- | --- | --- | --- | --- |
| GB/s | 13.42 | 1.80 | 14.91 | 14.13 |

If we look at the generated assembly, it confirms what we suspected (a context switch, and ymm registers being split):

vextractf128 xmm9,ymm6,1

vextractf128 xmm10,ymm7,1

vextractf128 xmm11,ymm8,1

call 00007FF8127C6B80 // exp

vinsertf128 ymm8,ymm8,xmm11,1

vinsertf128 ymm7,ymm7,xmm10,1

vinsertf128 ymm6,ymm6,xmm9,1

Branching

Branches are expressed using if statements or the ternary operator in scalar code. However, those are not available with Numerics.Vector, since the code is manually vectorized.

Branches must be expressed using ConditionalSelect, which leads to code:

public static Vector<double> func(Vector<double> x)

{

Vector<double> one = Vector<double>.One; // every lane set to 1.0

Vector<long> mask = Vector.GreaterThan(x, one);

Vector<double> result = Vector.ConditionalSelect(mask, x, one);

return result;

}
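To make the per-lane semantics concrete, here is a small self-contained sketch that compares the masked selection with an ordinary scalar branch. The class `BranchSketch` and its method names are hypothetical, and `one` is assumed to be a vector with every lane set to 1.0:

```csharp
using System;
using System.Numerics;

public static class BranchSketch
{
    // Scalar reference: the branch written with an ordinary if.
    public static double Scalar(double x)
    {
        if (x > 1.0) return x;
        return 1.0;
    }

    // Vector version: both outcomes exist for every lane, and the mask picks one.
    public static Vector<double> Vectorized(Vector<double> x)
    {
        Vector<double> one = Vector<double>.One;
        Vector<long> mask = Vector.GreaterThan(x, one); // all bits set where x > 1
        return Vector.ConditionalSelect(mask, x, one);  // x where set, 1.0 elsewhere
    }

    // Returns true when every lane of the vector version matches the scalar one.
    public static bool Agree(double[] samples)
    {
        int width = Vector<double>.Count;
        double[] lanes = new double[width];
        for (int start = 0; start < samples.Length; start += width)
        {
            // Fill one vector; unused lanes on the last pass repeat the final sample.
            for (int k = 0; k < width; ++k)
            {
                lanes[k] = samples[Math.Min(start + k, samples.Length - 1)];
            }
            Vector<double> r = Vectorized(new Vector<double>(lanes));
            for (int k = 0; k < width; ++k)
            {
                if (r[k] != Scalar(lanes[k])) return false;
            }
        }
        return true;
    }
}
```

`BranchSketch.Agree(new double[] { -2.0, 0.5, 1.0, 1.5, 3.0 })` checks values on both sides of the threshold. Note that the vector version always evaluates both sides of the condition, which matters once the branches become expensive.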

As we can see, expressing conditions with Numerics.Vector is unintuitive, intrusive, and bug-prone. It’s essentially the same as writing AVX compiler intrinsics in C++. Hybridizer, on the other hand, supports conditions, which allows you to write the above code this way:

[Kernel]

public static double func(double x)

{

if (x > 1.0)

return x;

return 1.0;

}

Conclusion

Numerics.Vector easily delivers reasonable performance on simple code (no branches, no function calls), where the speed-up is what we expect (the vector unit width). However, expressing conditions is time-consuming and error-prone, and performance collapses as soon as a JIT intrinsic is missing (such as the exponential).